Outreach is very important. Here's what I, as Sound Extensions, have been doing recently...


| Synthesizerwriter at the Suffolk Show 2022 (The badge is deliberate - the 2020 and 2021 shows didn't happen for some reason...) |

For the last day of May, and the first day of June, 2022 (that's the 31st of May and the 1st of June, just to be sure...), I was at the Suffolk Show, on the 'Innovation Martlesham' technology innovation stand (huge thanks to BT (the telco, not Brian Transeau)), trying to inspire young minds to take up a career in technology. My aim was to get kids to think about sound in a different way, and so I had a ‘3D Sound Box’ interactive exhibit, where you put your hands into a box, moved a controller around, and put some headphones on (with other people listening in on other headphones (parents, siblings, etc.)). As you moved the controller, the sound changed in subtle and complex ways, and the reaction was uniformly amazing - eyes opened wide, smiles appeared on faces, and parents ended up dragging the kids away some time later. ‘Cool!’ was the standard comment.

People totally comprehended that when you moved the controller so that it pointed in a particular direction, you got a particular sound, and that you could blend from one sound to another by moving the controller to another position. Several people said that they were expecting just a few sounds and a simple mix between them, and they were not expecting a complex morphing from one sound to another...

Behind the scenes, a DJ Tech Tools MIDI Fighter 3D provided the position controller, with two additional infra-red position sensors providing Left-Right and Backwards/Forwards control. The position vectors were fed into Ableton Live, where a custom MaxForLive script changed the rather strange outputs into more understandable controls suitable for controlling parameters (The MIDI Fighter 3D is designed for finger drumming!). These parameters were then used to control the positioning of the four source units in the convolution triangle in Phobos from Spitfire Audio (and BT, (Brian this time!)!) (I use a lot of stuff from Spitfire Audio!). Ableton was sending a C3 drone note to Phobos, and it was set up to produce very different sounds for each of the extreme positions, with Ableton also adding a controlled low pass filter on one axis. The output of Phobos was then tweaked further, ending up in the Envelop Spatial audio plug-in, and the final audio was delivered to stereo headphones using binaural processing. The convolution synthesis in Phobos was a key part of making this different to ‘four sounds in space that you mix between’ - which is what was apparently expected. (A couple of people wanted the standard ’sound rotating around your head’ binaural demo, so I had a Live Set for that as well!)

The MIDI Fighter 3D


| The DJ Tech Tools MIDI Fighter 3D MIDI Controller |

The MIDI Fighter 3D is a finger drumming MIDI controller that has 16 expensive, high quality, 'arcade-console' style buttons/switches that are designed for long life and consistent operation in punishing situations - several millions of pushes (5 million+ in this case). Elektron have similar switches on their gear where the buttons are going to be 'mashed' a lot in normal use. In contrast, ordinary 'click' switches are small, very low cost, and are made of a metal dome that collapses when you press it, and have a life of a few tens of thousands of clicks for the cheapest, with over a hundred thousand clicks for the more expensive versions.

(The rubbery buttons that you find on a lot of gear are called membrane switches, and here the flexible bit deforms when you press it, which pushes a piece of conductive plastic (containing lots of carbon, so it looks black) onto a printed circuit board, and so makes a connection. These are very low cost, are easy to light up with LEDs, and are easy to replace (you just replace the sheet of flexible stuff and all those little blobs of conductive plastic), which is a good thing because they can wear out with a lot of use, and they don't like dust or ash or powders, and they are not very consistent in operation - you know how some of them can deform in strange ways, and sometimes get stuck? As for computer keyboard switches, then that's a whole topic all to itself!)

But this isn't a blog on switches! What the MIDI Fighter 3D also has, and the reason for the '3D' part of the name, is a 3D accelerometer which can detect movement on 3 axes: x, y and z. There are several modes of operation, but the one I used was the 'Edge Tilt' mode. In this mode, if you tilt the MIDI Fighter 3D, then it outputs MIDI Continuous Controller / Control Change (CC) messages that indicate how much it has been tilted relative to each of the four bottom edges.


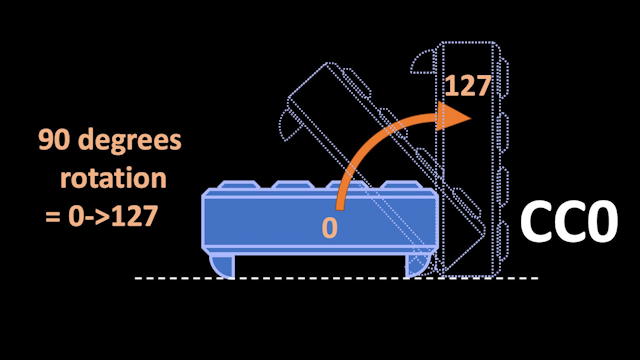

| Rotation on the right hand edge... |

So for the right hand edge, CC0 (zero) outputs a value of 0 (zero) when the MIDI Fighter 3D is horizontal (on a table, for example), and 127 when rotated clockwise by 90 degrees, so it is vertical.
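As a rough sketch of what those numbers mean, a CC value can be turned back into an approximate tilt angle. This assumes the response is linear between 0 and 127, which is a guess rather than something taken from the DJ Tech Tools documentation, and the function name is made up for illustration:

```python
def cc_to_tilt_degrees(cc_value: int) -> float:
    """Convert an Edge Tilt CC value (0-127) to an approximate angle in degrees.

    Assumption: the controller's response is linear, so 0 is horizontal
    and 127 is fully vertical (90 degrees).
    """
    if not 0 <= cc_value <= 127:
        raise ValueError("MIDI CC values must be in the range 0-127")
    return cc_value / 127 * 90.0


print(cc_to_tilt_degrees(0))    # horizontal
print(cc_to_tilt_degrees(127))  # vertical
```

A real accelerometer is unlikely to be perfectly linear, so treat the result as an indication rather than a measurement.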


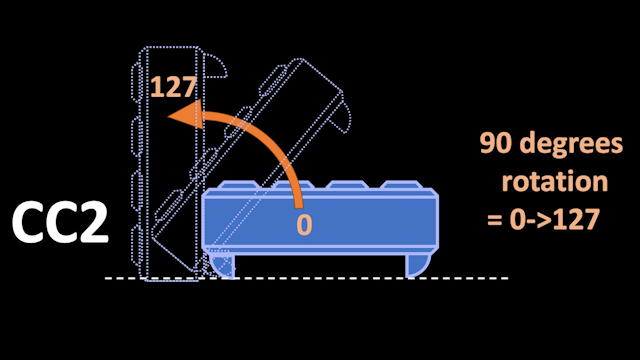

| Rotation on the left hand edge... |

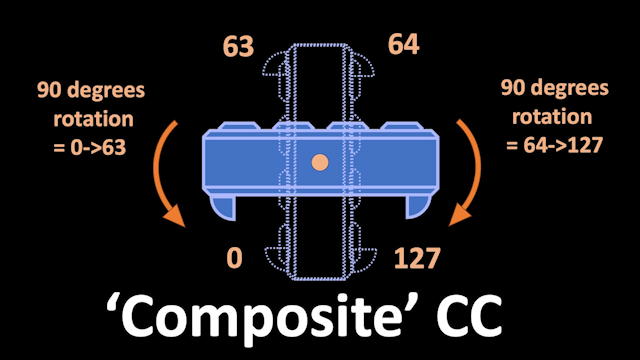

So, I wrote a little utility, in MaxForLive, that takes the CC messages and processes them for when you hold the MIDI Fighter 3D in your hands instead of resting it on a surface. It combines the 4 controller messages (CC0, 1, 2, and 3) into just a pair of messages, so that you can go from vertical, through horizontal and back to vertical again with a single continuous clockwise (or anti-clockwise) movement, and the result is a 'Composite' controller message that goes from 0 to 127 (with a small 'dead zone' as it goes through horizontal). This works for left and right, as well as forward and backwards, so you get two composite controllers.


| A Composite CC made up of CC0 and CC2 (or 1 and 3) |

The 'inverse' composite controllers are also output from the Utility, so you get pairs of CC messages that go from 0-127, and 127-0, simultaneously, depending on how much you have tilted the MIDI Fighter 3D away from the horizontal. Not only does this feel 'right' when you hold it in your hands, but it allows you to control two different parameters as if the MIDI Fighter 3D was a cross-fader - but one that works in two dimensions instead of just one.
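The composite idea can be sketched in a few lines of Python. This is not the MaxForLive patch itself, just one plausible way to get the same behaviour: the function name, the dead zone width of 4, and the choice to treat the two CCs as a signed difference are all assumptions made for illustration.

```python
def composite_cc(cc_a: int, cc_b: int, dead_zone: int = 4) -> tuple[int, int]:
    """Combine two opposite-edge tilt CCs into a composite and its inverse.

    cc_a rises as the controller tilts one way, cc_b as it tilts the other.
    Values at or below dead_zone are treated as 'horizontal' (an assumed
    threshold, not necessarily the one used in MIDIccTWEAK).
    """
    a = cc_a if cc_a > dead_zone else 0
    b = cc_b if cc_b > dead_zone else 0
    difference = a - b                    # -127 .. +127
    composite = (difference + 127) // 2   # 0 .. 127, 63 when horizontal
    inverse = 127 - composite             # runs the opposite way
    return composite, inverse


# Fully tilted one way, horizontal, and fully tilted the other way
for pair in [(127, 0), (0, 0), (0, 127)]:
    print(pair, composite_cc(*pair))
```

Calling it once with CC0 and CC2 and once with CC1 and CC3 would give the two composite controllers and their inverses.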


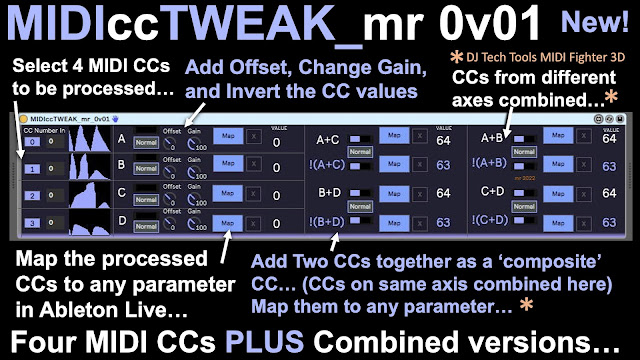

| The MIDIccTWEAK Utility written in MaxForLive... |

The MaxForLive Utility, MIDIccTWEAK, will work with any source of CC messages, and provides offset and gain control, plus inversion for the basic CC messages, then the paired 'composite' controllers, and finally paired 'composite' controllers using the 'other' pairing - for this application, it uses position sensors at 90 degrees to each other, instead of being on opposite sides of the MIDI Fighter 3D. That's 12 mappable outputs from the four CC sources.
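For anyone who prefers code to patch cords, here is a minimal sketch of what offset, gain and inversion do to a single CC value. The order of operations (gain, then offset, then clamp, then invert) and the function name are assumptions for illustration, and may not match what MIDIccTWEAK does internally.

```python
def tweak_cc(value: int, gain: float = 1.0, offset: int = 0, invert: bool = False) -> int:
    """Apply gain, offset and optional inversion to a CC value (0-127)."""
    scaled = round(value * gain) + offset
    clamped = max(0, min(127, scaled))      # keep the result a legal CC value
    return 127 - clamped if invert else clamped


print(tweak_cc(100, gain=0.5, offset=10))   # halve the range, then lift it
print(tweak_cc(0, invert=True))             # inversion flips the direction
```

The clamp matters: without it, a gain above 1 could produce values that are not valid MIDI data bytes.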


| What can the MIDIccTWEAK Utility do? |

The MIDIccTWEAK Utility can be downloaded from MaxForLive.com...

Inside the M4L code...

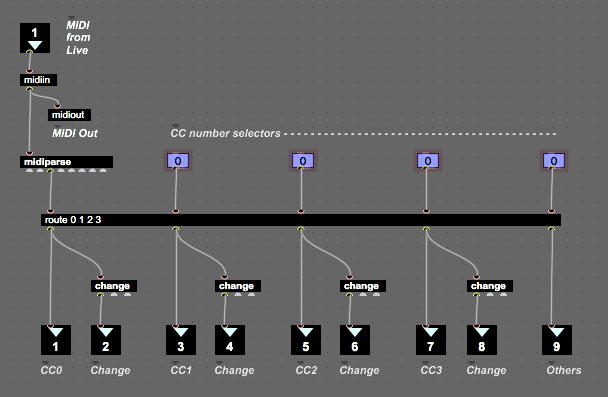

A couple of interesting things came up whilst programming MIDIccTWEAK, although neither of them is clever or unusual, which is usually when I include more details. Nope, in this case it was an interesting option and a potential trap for the unwary. Here's a fragment of the M4L code:


| Part of the M4L 'code' for MIDIccTWEAK... |

Now, most of this is perfectly ordinary: a MIDI In (...and an Out to provide a 'Thru' to the next MIDI device in the chain...), a MIDI Parse object to decode the MIDI messages, and a Route object to extract just the MIDI CCs that we are interested in. But note those 'change' objects - this was me testing out an idea to try to ensure that the MIDI bandwidth consumed by Controllers was minimised. The 'change' object filters out any repetitions of the same number, and so this code was imported from a prototype M4L device where I was monitoring several MIDI Controller hardware devices to see what their outputs looked like...
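The behaviour of the 'change' object is easy to sketch outside Max. The Python below is only an approximation for illustration (the class and function names are made up, and the initial stored value here is 'nothing yet', which may differ from the Max object):

```python
class Change:
    """Pass a value through only when it differs from the previous one."""

    def __init__(self):
        self._last = None

    def __call__(self, value):
        if value == self._last:
            return None          # repetition: filtered out
        self._last = value
        return value


def filter_repeats(values):
    """Run a list of CC values through a Change filter."""
    change = Change()
    return [v for v in values if change(v) is not None]


print(filter_repeats([10, 10, 11, 11, 11, 10]))
```

Only consecutive repeats are removed, so a value that comes back later still gets through.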

I was expecting to see some repetitions of the same controller value in the Controller Messages, and yes, I know that the alternative name for Continuous Controller messages is 'Control Change' messages, but I was still curious to see what actually happened. It turns out that everything that I looked at sent streams of messages of changes to MIDI Control values, and even when the faders, rotary controls, levers, and other physical controls were moved very slowly, then repetitions were still filtered out just about all of the time. In the past, I have seen at least one Mod Wheel where careful positioning of the wheel would produce continuous messages with two slightly different values, but not recently. I have talked about this before in this blog, but it is always interesting to see just what the difference in values (the 'delta') is in reality. Theoretically, it should be one, but this uses lots of MIDI bandwidth, and rapid changes of controller value are going to create long streams of controller messages that will just clog things up. So a more sensible approach would be to send controller messages at fixed intervals of time, so that there is lots of detail for slow movements, and less detail for fast movements. I suspect there is a 'rule of thumb' set of values for timing, but I have never done deep enough research into this. Let me know if you want me to revisit MIDI Controllers and look at this aspect of them.
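The 'fixed intervals of time' idea can be sketched like this. The 10 ms interval is purely a placeholder (there is no claim here that it is the right 'rule of thumb' value), and the function name and the (time, value) event format are assumptions for illustration:

```python
def throttle(events, interval_ms: float = 10.0):
    """Reduce a stream of (time_ms, value) events to at most one per interval.

    Within each interval only the most recent value is kept, so fast
    movements lose intermediate detail while slow movements pass intact.
    """
    output = []
    window_end = None
    latest = None
    for time_ms, value in events:
        if window_end is None:
            window_end = time_ms + interval_ms
        # Close every interval that has ended before this event arrived
        while time_ms >= window_end:
            if latest is not None:
                output.append((window_end, latest))
                latest = None
            window_end += interval_ms
        latest = value
    if latest is not None:
        output.append((window_end, latest))
    return output


fast_then_slow = [(0, 1), (1, 2), (2, 3), (12, 4), (35, 5)]
print(throttle(fast_then_slow))
```

The three rapid values at the start collapse into one message, whilst the later, slower changes each get their own.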

What ought to be useful about the 'route' object is the final output, where any messages that don't match a CC number are dumped - an 'else' or 'other' or 'default' output (it depends on which programming languages you are familiar with!). But I haven't ever needed a 'Controllers you haven't selected' detector. I suppose you could use it to look for unusual activity from controller numbers that shouldn't be outputting anything, but it just seems to be something that should have a use, but I haven't stumbled across it yet.
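In conventional programming terms, the 'route' object behaves something like the sketch below, where the last outlet is the 'else' branch. This is an illustration in Python rather than a description of the Max internals, and the function name is made up:

```python
def route_cc(cc_number: int, value: int, wanted: list[int]):
    """Return (outlet_index, payload) for a CC message.

    Outlets 0..len(wanted)-1 correspond to the wanted CC numbers in order
    and carry just the value; the final outlet, index len(wanted),
    receives the whole unmatched message.
    """
    if cc_number in wanted:
        return wanted.index(cc_number), value
    return len(wanted), (cc_number, value)


print(route_cc(2, 100, [0, 1, 2, 3]))   # matched: goes to the third outlet
print(route_cc(7, 50, [0, 1, 2, 3]))    # unmatched: goes to the 'other' outlet
```

Counting what arrives at that final outlet would be one way to build the 'Controllers you haven't selected' detector.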

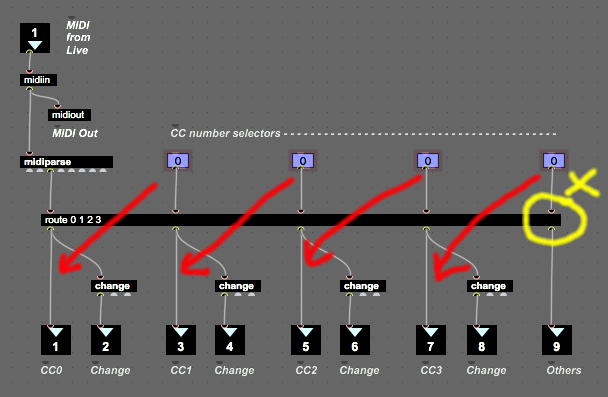

More interesting, and not restricted to only this M4L object, is a potential trap for the unwary. Look at the right most input and output - at the top, you have the number box for selecting which controller the 'route' object should be matching, and underneath, you have the 'Other' output, for when no Controller number message matches the specified numbers. Whoa!


| A potential trap for the unwary... |

Actually, those number boxes, which control the matches that appear at the outputs, are offset by one horizontally - to the left (because of the input on the top of the 'route' object). The second input determines what comes out of the first output of the 'route' object, and so on across the object. Lots of MaxForLive objects are like this, when you look for it, and it is one of the things that you need to watch out for, especially when you extend object boxes very wide, as here (or wider!), so that you can keep all of the parallel output paths neat and tidy. If the left hand edge is off-screen, and you only look at the right hand edge, then the number box looks like it ought to be controlling the output underneath it - so the implied connection inside the yellow circle is not correct!
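The off-by-one relationship is simple enough to write down. This tiny sketch just restates the layout described above, numbering inputs and outputs from 0, left to right (the function name is made up for illustration):

```python
def outlet_controlled_by_inlet(inlet: int, match_count: int) -> int:
    """Return the outlet whose match value is set by the given inlet.

    Inlet 0 is the message input, so inlet n sets the match for
    outlet n - 1. The outlet numbered match_count is the 'Other' output.
    """
    if not 1 <= inlet <= match_count:
        raise ValueError("only inlets 1..match_count set a match value")
    return inlet - 1


# With four matches, the right-most inlet (4) sits above the 'Other'
# outlet (4), but it actually controls outlet 3
print(outlet_controlled_by_inlet(4, 4))
```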


Multiple controls

Inside the '3D Sound Box' used in the interactive exhibit, there are additional infra-red position sensors that are used to detect side-side movement (used to control left-right panning), and vertical (up/down) movement (used to control the overall tone of the audio), so there are at least four separate independent ways that you can control things just by picking up the MIDI Fighter 3D and moving it around inside the box.


| The 3D Sound Box... |

You can just see the MIDI Fighter 3D resting on the raised floor of the 3D Sound Box in the photo above.

Sound Generation

There was quite a lot of sound design behind the sounds that were produced. I wanted something subtle, sophisticated and very controllable, and I definitely didn't want to have a 'sound going round your head'-type of binaural demonstration, because those have always felt gimmicky and not very real world - I can't recall a real musical performance where position was that important or centre stage, with the exception of Tim Souster's 0dB concert at the Royal Northern College of Music in Manchester, in the late 1970s (and I was there!), where there was a lot of performing 'in the round'...

Instead, I chose a drone sound, and mapped the MIDI Fighter and the up/down sensor to changing the timbre of that sound. I used the Spitfire Audio / BT 'Phobos' polyconvolution synthesis virtual instrument to generate the sounds, inside Ableton Live. Four Source Units provided the inputs to three convolution synthesizers, with the controllers moving the four sources around in the convolution triangle. I also added a filter control so that anti-clockwise rotation changed the cut-off frequency of a low-pass filter (a cliche, I know, but it was very popular!), and added in some percussive sounds when the controller was tilted all the way backwards. All in all, there were lots of different timbres and smooth blends between them as you moved the MIDI Fighter 3D around inside the box.
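To give a flavour of the controller-to-parameter mapping, here is a hedged sketch of two of the mappings mentioned above: a CC value driving a low-pass filter cut-off, and a 'tilted all the way backwards' test for bringing in the percussive sounds. The frequency range, the exponential curve, the threshold of 120 and the function names are all illustrative assumptions, not values taken from the actual Live Set.

```python
def cc_to_cutoff_hz(cc_value: int, low_hz: float = 200.0, high_hz: float = 12800.0) -> float:
    """Map a CC value (0-127) to a filter cut-off on an exponential curve.

    An exponential mapping gives equal musical intervals for equal
    amounts of movement, which tends to feel more natural than linear.
    """
    position = max(0, min(127, cc_value)) / 127
    return low_hz * (high_hz / low_hz) ** position


def percussion_enabled(cc_value: int, threshold: int = 120) -> bool:
    """Treat CC values at or above the threshold as 'tilted all the way'."""
    return cc_value >= threshold


for cc in (0, 64, 127):
    print(cc, round(cc_to_cutoff_hz(cc)), percussion_enabled(cc))
```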

I added a little bit of reverb to provide additional spatialisation, and then passed the various audio outputs into the headphones via Envelop binaural plug-ins. I used a Studiospares HAR-60 six channel headphone amplifier to drive six pairs of headphones - and Studiospares gave me an amazing deal for buying Mackie closed-back headphones in bulk!

People rapidly got how it worked! There's something about moving your hands making a sound change that activates a kinetic spatial memory thingy inside your head, and when the changes are complex and involving, then you get lost inside the soundscape. In a world where people don't seem to listen hard to music any longer, it was amazing to see people concentrating on what they were hearing. One pair of headphones was used by the 'driver', whilst the other headphones allowed other people (parents, friends, etc.) to listen in. In many cases, passive listening resulted in them wanting to 'have a go' as well!

What the 3D Sound Box represents is a first look at how a simple interface can be produced for multiple complex devices. If the MIDI Fighter 3D and the two infra-red sensors were separate controls, then using it would not be as intuitive, or as easy. During the whole two days, not a single person asked me how to move the MIDI Fighter 3D around to control the sound - they just did it!

Talking to parents, they were expecting 120 bpm, 4/4 EDM, and were totally surprised that someone would instead demonstrate something which was subtle, deep, sophisticated and sounded high quality - I explained that the core of the sound came from one of the top sample library companies in the world… So the sound design that I did worked very nicely. Kids (and their parents) loved the exhibit - one family said that it was the best thing they had seen (heard!) all day!

Outreach

The success of an interactive exhibit like this is about reaction at the time, and then about follow ups. In the two days, I got huge numbers of smiles ('This is the first time I've seen a smile like that for ages!' was one parent's comment...) and a universal 'Cool!' reaction from just about everyone, especially young kids. I have been invited to schools to do more demos, asked by a local media group if I would be interested in a collaboration, and the local university is also interested in talking to me more about this type of interaction and how to programme it.

So, my grateful thanks to Spitfire Audio and BT for Phobos, because it was a key part of a very successful exhibit that may well have influenced more than 100 young minds into thinking about sound in a different way. Also thanks to Ableton for Live, and especially MaxForLive, a toolkit that I use all the time! Thanks to the amazing guys at DJ Tech Tools for their MIDI Fighter 3D controller. Thanks to Studiospares for a beautifully thought-out headphone amplifier. And finally, thanks to Envelop for making spatial audio so accessible in Ableton Live! Oh, and thanks again to BT (the telco) and Innovation Martlesham for making this all possible!